ARTICLE 2 OF 6

Your Databricks Deployment Will Cost You More Than You Think. Here Is the Full Maths.

Have you ever tried to get a straight answer on what Databricks will actually cost you at scale?

Not the entry price. Not the POC. The real number — five years in, 1,000 users, production AI and analytics running — with a BI layer, a data catalog, streaming pipelines, identity management, and a full team of engineers who can actually operate all of it.

Most data leaders cannot answer that question with confidence until they are already committed. By then, the DBU meter is running and the hiring plan is approved.

This article gives you that number before you sign anything — platform costs, people costs, and the integration overhead that every Databricks TCO analysis conveniently omits.

Let me be clear upfront: Databricks is a serious engineering platform built by exceptional people. The Lakehouse architecture is genuinely innovative. What I am examining is not the technology — it is the full cost of operating it in production, which is a very different conversation.

Many investors argue that building a platform at low cost is no great achievement: hundreds of companies have the technology, and it is the go-to-market (GTM) that matters. That is a fair point. But eventually every cost has to be paid by customers or investors. With version 11.0, BDB is now GTM-ready.

The Four Cost Forces — and Why They Compound

Most published Databricks TCO models account for one cost layer: platform licensing. The reality has four, and they all scale in the same direction.

💰

Platform Licensing

DBU consumption grows non-linearly with data volume and concurrent users. The visible part of the iceberg.

👥

People Cost

Specialist Spark engineers, MLOps, cloud infra, BI tooling, and governance admins — each a separate, senior hire. Sits below the waterline.

🔗

Integration Overhead

Each best-of-breed component requires its own operator and integration layer to hold the assembly together. Rarely appears in an RFP.

📈

Compounding Scale

At higher data volumes, all three forces above grow simultaneously — the gap between a multi-vendor stack and an integrated platform widens materially.

Platform cost is the visible part of the iceberg. People cost and integration overhead are what sit below the waterline.
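The way these forces add up can be made concrete with a small calculation. The sketch below is a minimal illustration in Python, not a pricing model from BDB or Databricks: the `CostLayer` class, the growth rates, and every figure are hypothetical placeholders to be replaced with your own quotes and salary data. It treats the first three forces as cost layers and the fourth, compounding scale, as an annual growth rate applied to each layer.

```python
from dataclasses import dataclass


@dataclass
class CostLayer:
    """One cost force: a year-1 amount plus an assumed annual growth rate."""
    name: str
    year_one_cost: float   # cost in year 1, in any currency unit (placeholder input)
    annual_growth: float   # 0.10 means the cost grows 10% per year (assumption)

    def cost_in_year(self, year: int) -> float:
        """Cost in a given year, where year 1 is the first year of operation."""
        return self.year_one_cost * (1 + self.annual_growth) ** (year - 1)

    def total(self, years: int) -> float:
        """Sum of the yearly costs over the whole period."""
        return sum(self.cost_in_year(y) for y in range(1, years + 1))


def total_cost_of_ownership(layers: list[CostLayer], years: int = 5) -> float:
    """Add every cost layer over the period to get a single TCO figure."""
    return sum(layer.total(years) for layer in layers)


# Hypothetical placeholder inputs, NOT real prices or salaries.
layers = [
    CostLayer("platform licensing", 100.0, 0.10),
    CostLayer("people", 200.0, 0.0),
    CostLayer("integration overhead", 50.0, 0.0),
]

print(f"5-year TCO: {total_cost_of_ownership(layers):.2f}")
```

Setting a non-zero growth rate on more than one layer at once is what the "compounding scale" card describes: the layers do not trade off against each other, they rise together.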

The People Problem Nobody Puts in the Slide

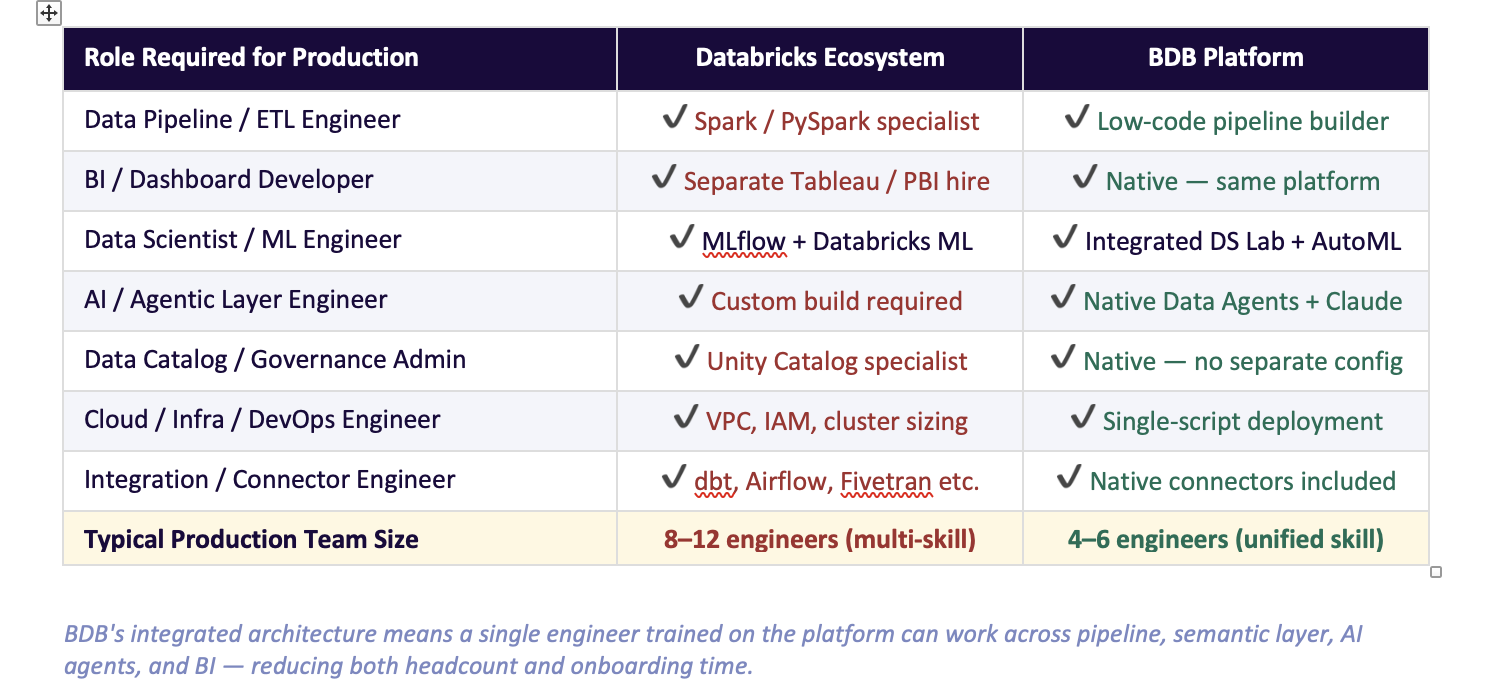

Here is the question that rarely appears in a platform RFP: how many engineers does this platform require to run in production, and what do they cost?

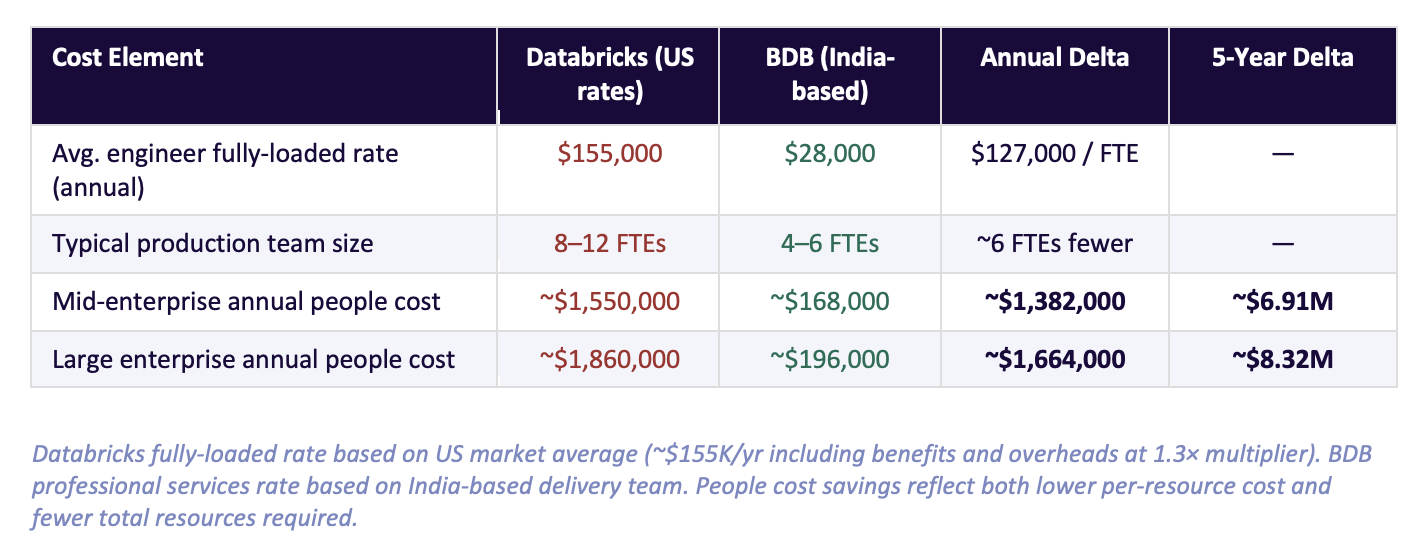

A Databricks production deployment is not operated by one generalist. It requires a team spanning at least five distinct skill profiles — Spark engineering, MLOps, cloud infrastructure, BI tooling, and governance administration. These are specialist roles, and in the US and European markets, specialist roles carry specialist salaries.

The contrast is structural, not incidental. Because Databricks is an assembly of best-of-breed components rather than a single integrated system, each component requires its own operator. BDB's unified architecture means a skilled BDB engineer covers ground that would require three to four separate Databricks specialists.

Here is what that difference costs over five years:

The saving is not just that BDB engineers cost less per hour. It is that you need fewer of them — because the platform does not require an integration team to hold it together.

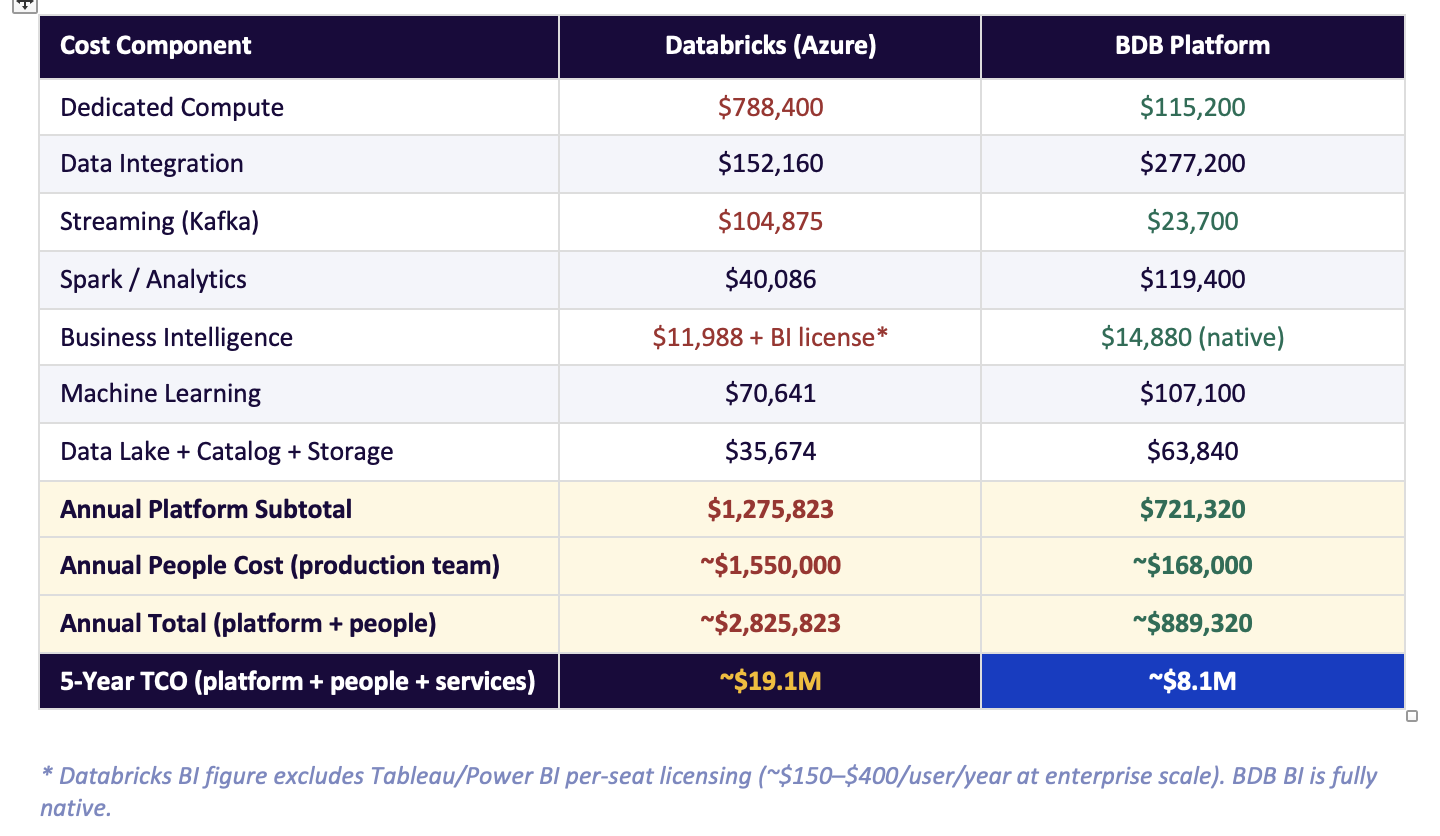
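To reproduce this kind of people-cost comparison with your own figures, a team plan can be costed role by role. The following is a rough Python sketch under stated assumptions: the role names in `multi_vendor_team` are the five skill profiles listed above, while the headcounts, the annual cost of 100.0 units per person, and the shape of `integrated_team` are hypothetical placeholders, not market salaries or BDB staffing guidance.

```python
def team_cost(team: dict[str, tuple[int, float]], years: int = 5) -> float:
    """Total loaded cost of a team over `years`.

    `team` maps a role name to (headcount, annual loaded cost per person).
    """
    annual = sum(headcount * cost for headcount, cost in team.values())
    return annual * years


# Placeholder headcounts and costs; substitute current market rates.
multi_vendor_team = {
    "Spark engineering": (1, 100.0),
    "MLOps": (1, 100.0),
    "cloud infrastructure": (1, 100.0),
    "BI tooling": (1, 100.0),
    "governance administration": (1, 100.0),
}

# Hypothetical smaller team for an integrated platform (an assumption).
integrated_team = {
    "platform engineer": (2, 100.0),
}

difference = team_cost(multi_vendor_team) - team_cost(integrated_team)
print(f"5-year people-cost difference: {difference:.2f}")
```

The function multiplies headcount by per-person cost, so the two effects described above (fewer people, and a lower cost per person) can be varied independently.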

The Numbers, Side by Side — With People Included

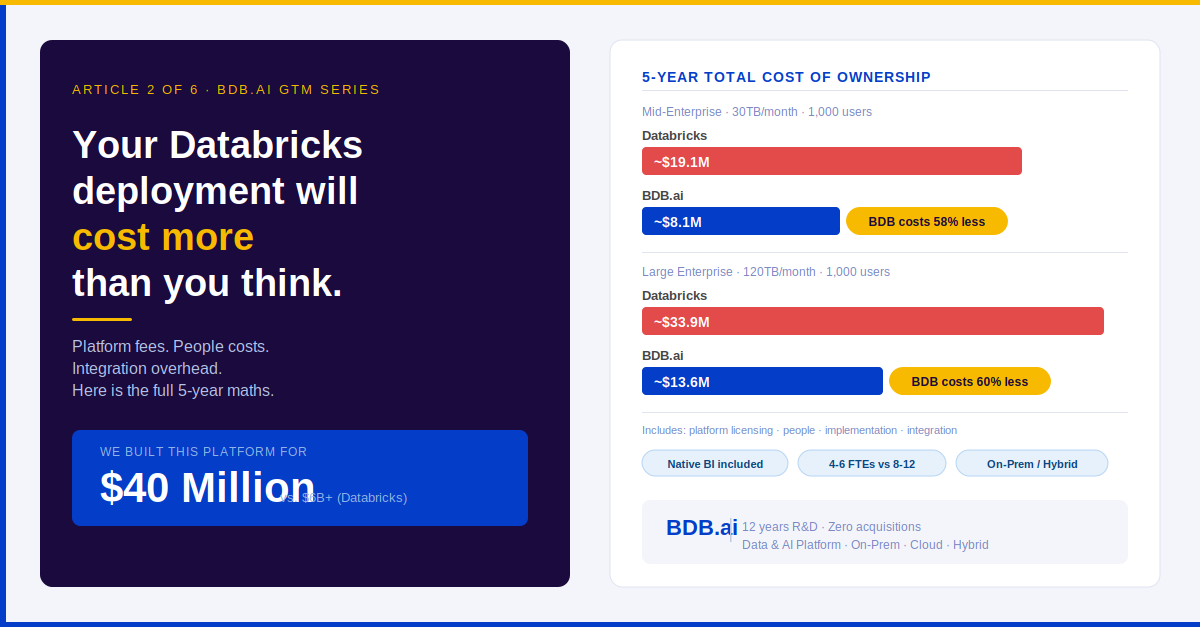

Mid-Enterprise: 30TB/month · 1,000 Users · 5-Year View

Five-year view, including platform costs, people costs, and implementation services:

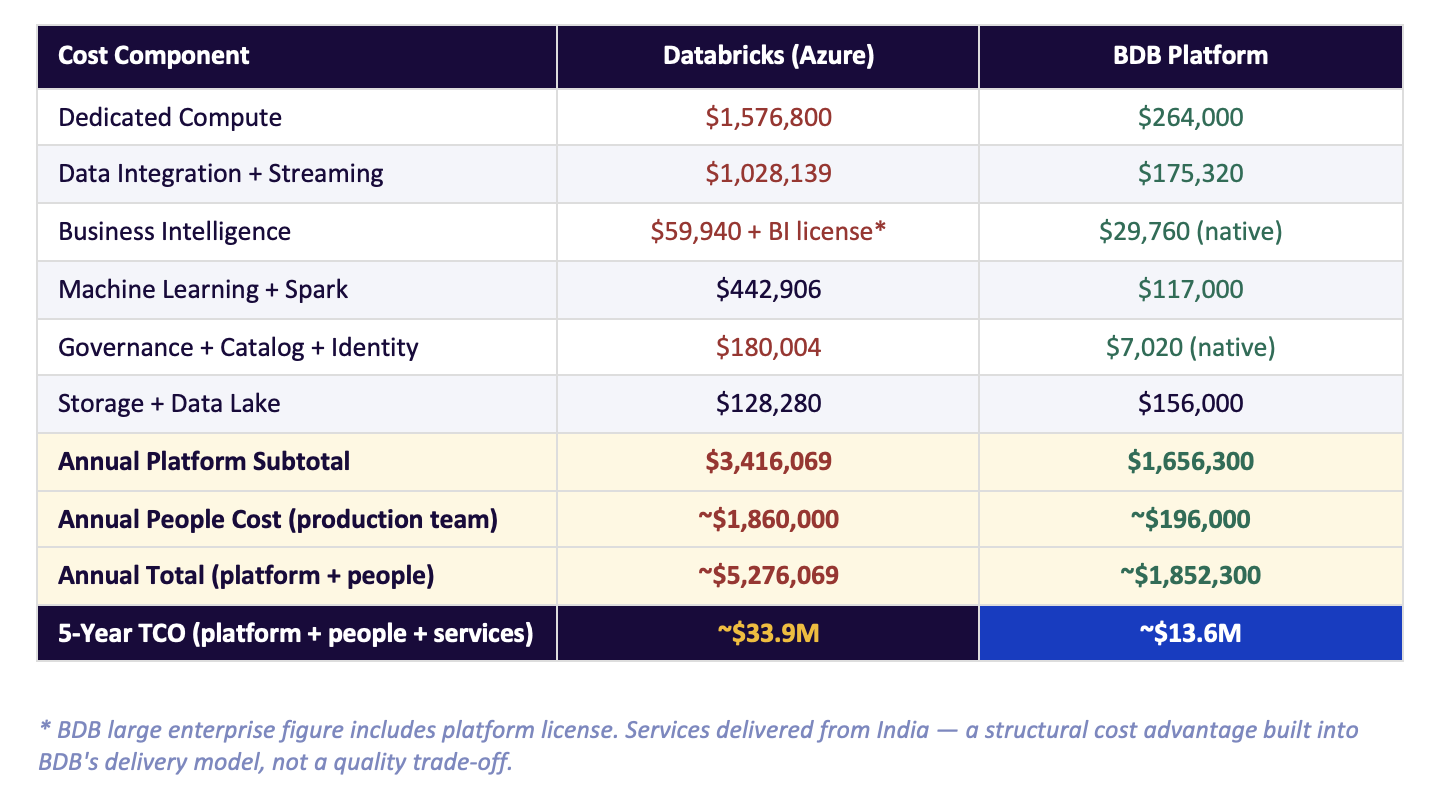

Large Enterprise: 120TB/month · 1,000 Users · 5-Year View

The gap widens materially at scale, as DBU consumption, team complexity, and integration overhead all grow simultaneously:

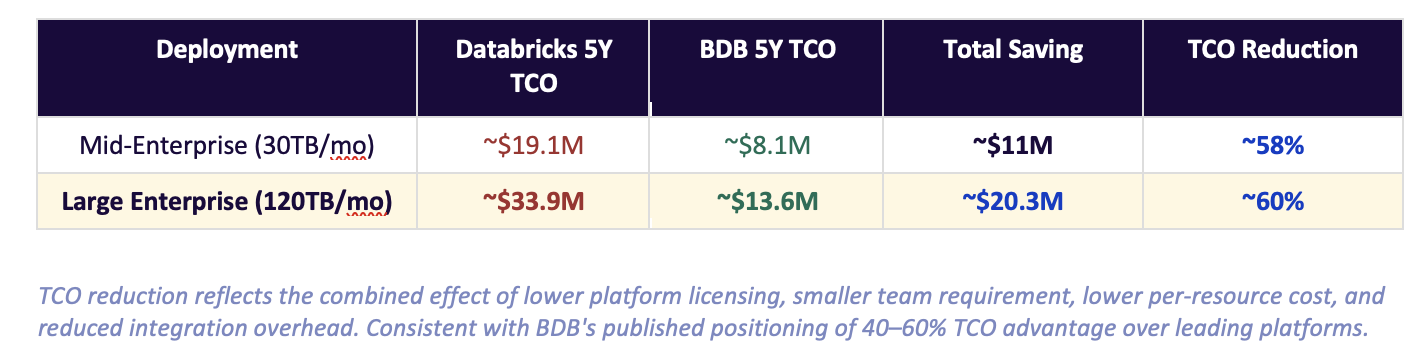

The 5-Year TCO Summary

When platform, people, and services are combined into a true 5-year total cost of ownership:

TCO reduction reflects the combined effect of lower platform licensing, a smaller team requirement, lower per-resource cost, and reduced integration overhead. This is consistent with BDB's published positioning of a 40–60% TCO advantage over leading platforms.

The 58–60% saving range is not a marketing claim. It is the arithmetic outcome of replacing a multi-vendor assembly with one integrated platform operated by a leaner, lower-cost team.
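A saving percentage of this kind is a simple ratio, which makes it easy to recompute from your own totals. The helper below is a minimal Python sketch; the function name `tco_reduction` and the example inputs are illustrative assumptions and do not reproduce the figures behind the range quoted above.

```python
def tco_reduction(baseline_tco: float, alternative_tco: float) -> float:
    """Fractional saving of the alternative relative to the baseline.

    Both inputs are total costs over the same period, covering platform,
    people, and services. A result of 0.25 means a 25% lower TCO.
    """
    if baseline_tco <= 0:
        raise ValueError("baseline_tco must be positive")
    return (baseline_tco - alternative_tco) / baseline_tco


# Hypothetical totals, for illustration only.
print(f"{tco_reduction(200.0, 150.0):.0%}")
```

The comparison only means something when both totals cover the same scope and the same number of years, so include people and services on both sides before computing the ratio.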

One More Factor: Where Your Data Actually Lives

There is a dimension the platform TCO comparison above does not capture: deployment flexibility — and the security and compliance implications that come with it.

Databricks is architected as a cloud-native platform. It runs on AWS, Azure, or GCP. If your organisation has requirements that prevent data from residing in a hyperscaler environment (regulatory mandates, sovereign data laws, defence and government sector rules, or simply a security posture that requires on-premises control), Databricks is not a viable option without significant architectural workarounds.

BDB is built for all three deployment models:

🏢

On-Premises

Full platform deployment within your own data centre. No data leaves your perimeter. The deployment model for banking, healthcare, government, and defence.

☁️

Cloud

Deployed on AWS, Azure, GCP, or any Indian cloud provider. Full platform capability with no cloud-vendor lock-in.

🔀

Hybrid

Data processing on-premises with cloud burst capability for peak workloads. On-premises governance with cloud analytics layers.

For enterprise customers in banking, telecom, healthcare, and government — verticals where BDB operates globally — the on-premises and hybrid options are often the reason BDB is in the conversation at all. The 50%+ TCO advantage is compelling. The ability to deploy in a private data centre when the regulator requires it is non-negotiable.

This is not a capability Databricks can replicate with a configuration flag. It is an architectural choice that was made at the beginning of BDB's design — and it reflects twelve years of working with regulated enterprise environments where security is not a checkbox but a first principle.

Cloud-only is not a feature. For regulated industries, it is a disqualifier. BDB's deployment flexibility is itself a competitive differentiator that does not appear in any cost table.

What Databricks Is Genuinely Right For

I want to be direct about where this comparison points the other way.

If your primary workload is large-scale Spark data engineering — heavy transformation, complex Delta Lake pipelines, GPU-scale ML model training — and you have a strong in-house team that knows the Databricks ecosystem deeply, it is a powerful choice. The notebook experience is excellent. The MLflow integration is mature. The community is large.

The 5-year cost curve tilts sharply when you need the full analytics stack on top — governance, self-service BI, agentic AI, mobility — and you are assembling those layers from separate vendors. That is when the people cost and integration overhead in the tables above become real line items in your budget.

Three Questions Worth Asking Before You Commit

If you are in an active Databricks evaluation or approaching a renewal, here are three things worth verifying with your own numbers:

1

What does the team plan look like at year 3?

Not the pilot team — the production operations team. Cost every role at current market rates and include it in the TCO.

2

What BI tool are you adding on top, and what is the per-seat cost at 1,000 users?

Include it in the platform cost, not a separate budget line.

3

Does your organisation have any regulatory, sovereign data, or security requirements that mandate on-premises or hybrid deployment?

If yes, verify your platform options before the procurement process advances.
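The second and third questions reduce to two small checks, sketched below in Python. Everything here is a hypothetical illustration: the function names, the seat price, and the candidate names "Platform A" and "Platform B" are placeholders, not vendor data.

```python
def platform_cost_with_bi(platform_cost: float, seat_cost_per_year: float,
                          users: int = 1000, years: int = 5) -> float:
    """Fold BI seat licences into the platform line instead of a separate budget."""
    return platform_cost + seat_cost_per_year * users * years


def viable_platforms(candidates: dict[str, set[str]],
                     required_deployment: str) -> list[str]:
    """Keep only the candidates that support the required deployment model."""
    return [name for name, models in candidates.items()
            if required_deployment in models]


# Placeholder figures: 500.0 units of platform cost, 0.1 units per seat per year.
print(platform_cost_with_bi(500.0, 0.1))

# Placeholder candidates; fill in from each vendor's own documentation.
candidates = {
    "Platform A": {"cloud"},
    "Platform B": {"cloud", "on-premises", "hybrid"},
}
print(viable_platforms(candidates, "on-premises"))
```

Running the deployment filter before the cost comparison keeps a platform that cannot meet a regulatory requirement out of the TCO table altogether.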

We are happy to run a side-by-side TCO analysis for your specific scenario — including the people model. If the numbers favour Databricks for your use case, we will tell you that. The goal is to help you make an informed decision, not to win a slide comparison.

Next in this series: Article 3 examines the Semantic Layer — the most undervalued capability in enterprise analytics, and why platforms that treat it as an afterthought are creating hidden data debt for their customers.
